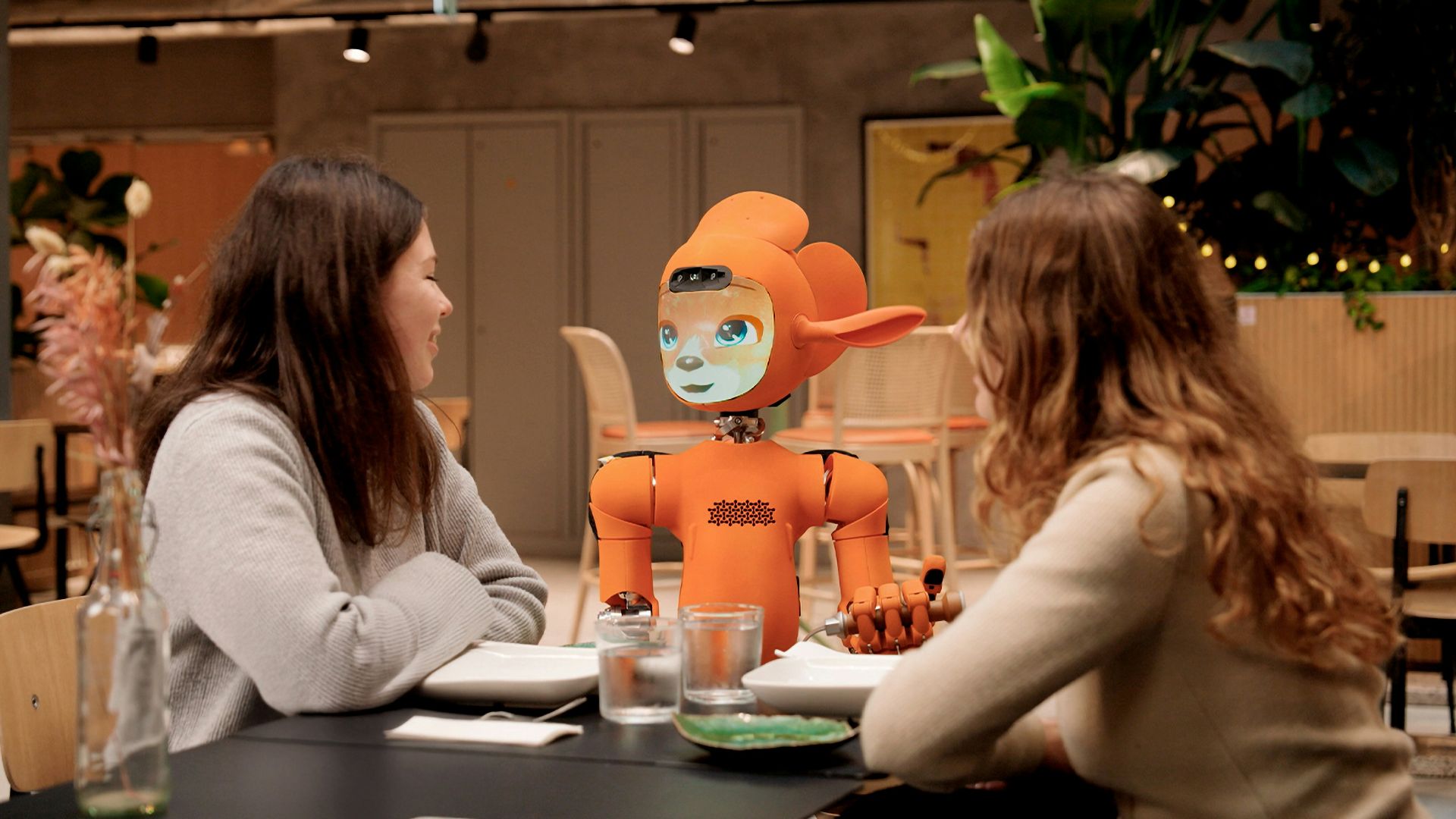

Something quietly shifted in the past few years, and most people didn't notice when it happened. The chatbot you once used to get a recipe or track a package started remembering your name, asking how your week went, and responding to your bad day with something that felt uncomfortably close to warmth. We're not in the era of clunky FAQ bots anymore. The AI companion has arrived, and it's sitting with millions of people every single day.

The numbers back this up. Replika, one of the earliest dedicated AI companion apps, reported over 10 million registered users by 2023, with a significant portion describing their AI as a close friend or romantic partner. Character.AI, which lets users create and interact with personalized AI personas, reached over 20 million monthly active users within roughly 14 months of its public beta launch. These figures represent a genuine behavioral shift in how people are choosing to spend their social energy.

The Loneliness Factor Driving All of This

Loneliness was already a documented public health crisis before AI companions became sophisticated enough to matter. The U.S. Surgeon General's 2023 advisory on social disconnection described loneliness as an epidemic, noting that Americans reported fewer close friends and less frequent meaningful contact than in previous decades. Research from Cigna found that more than half of American adults reported measurable loneliness, a figure that held steady even as COVID-era restrictions ended. That's the soil in which AI friendship grew.

What these apps offer isn't a replacement for human connection so much as a pressure valve. Someone who feels like a burden to their friends, or who lives alone and goes days without real conversation, can open an app and talk to something that listens without judgment, never checks its phone mid-sentence, and doesn't cancel plans. Whether or not that's emotionally "real" almost becomes a secondary question when the alternative for many people is silence.

The psychological literature on parasocial relationships, the one-sided bonds people form with celebrities or fictional characters, suggests that human brains don't require full reciprocity to form genuine emotional attachment. Researchers like Adam Waytz at Northwestern have studied how people readily attribute mental states and feelings to non-human entities. AI companions are just the next iteration of a very old human tendency.

What the Technology Can Actually Do Now

It is almost unfair to compare what AI could do in 2018 with what it can do now. Earlier systems followed rigid scripts, forgot context between sessions, and broke immersion the moment you asked anything unexpected. Current large language models maintain conversation history, adapt tone based on your mood, and generate responses that are contextually coherent across long exchanges. The illusion of continuity, which matters enormously for any sense of relationship, is finally sustainable.

Apps like Replika and newer entrants like Pi from Inflection AI are specifically designed to prioritize emotional attunement. Pi, for instance, was built with a stated focus on being a supportive conversational partner rather than a task assistant. Inflection's team included researchers with backgrounds in psychology and human-computer interaction, and the system was trained with particular attention to how people talk when they're processing emotions rather than seeking information.

Voice is the next frontier, and it is already being crossed. Several platforms now offer real-time voice conversation with low enough latency to feel natural, and voice adds a layer of presence that text simply cannot. When you hear a warm, patient voice responding to something you said, the brain processes it differently than reading words on a screen. That shift matters more than any feature list.

Where the Discomfort Comes In

None of this is without real tension. Psychologists and ethicists have raised legitimate concerns about what happens when people, especially younger users or those experiencing mental health struggles, begin to prefer AI interaction over human connection. A 2023 study published in the journal Computers in Human Behavior found that higher levels of AI companion use correlated with increased social withdrawal in some users, though the causal direction remains debated. The same study noted that many users reported the apps helped them practice social skills.

There's also the question of what these companies owe their users emotionally. Replika faced significant backlash in early 2023 when it abruptly changed its AI's behavior, effectively altering the personality of a "friend" millions of users had grown attached to. People were genuinely devastated in ways that surprised some observers, and perhaps shouldn't have. When you build emotional investment into a product, the product's decisions become personal.

We're still in the early innings of figuring out what it means to have a friendship with something that isn't alive. The technology is ahead of the ethics, the regulation, and honestly, our own self-understanding. What seems clear is that people aren't going to stop reaching for connection wherever they can find it, and AI has made that reach a lot easier to complete.